The Azure Data Lake Store is an integral component for creating a data lake in Azure, as it is where data is physically stored in many data lake implementations. Under the hood, the Azure Data Lake Store is a web implementation of the Hadoop Distributed File System (HDFS), meaning that files are split up and distributed across an array of cheap storage.

This blog covers how files are physically stored in the Azure Data Lake Store, followed by best practices that build on the data lake framework.

Azure Data Lake Store File Storage

As mentioned, the Azure Data Lake Store is a web implementation of HDFS. Each file you place into the store is split into 250MB chunks called extents, which enables parallel reads and writes. For availability and reliability, each extent is replicated into three copies. Because files are split into extents, bigger files offer more opportunities for parallelism than smaller files. A file smaller than 250MB is allocated to one extent and processed by one vertex (a unit of work in Azure Data Lake Analytics), whereas a larger file is split across many extents and can be read by many vertices.
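To make the extent arithmetic concrete, here is a minimal sketch that assumes the 250MB extent size and three-way replication quoted above. It only counts extents; it does not talk to the store, and the function names are hypothetical.

```python
import math

# Extent size quoted in this post; treated here as an assumption, not an official API constant.
EXTENT_SIZE_MB = 250
# Number of copies each extent is replicated into, as described above.
REPLICAS = 3


def extent_count(file_size_mb: float) -> int:
    """Number of extents a file occupies; anything up to 250MB fits in one."""
    if file_size_mb < 0:
        raise ValueError("file size cannot be negative")
    return max(1, math.ceil(file_size_mb / EXTENT_SIZE_MB))


def replicated_extents(file_size_mb: float) -> int:
    """Total extent copies held once replication is applied."""
    return extent_count(file_size_mb) * REPLICAS


for size in (100, 250, 1000, 1001):
    print(f"{size}MB -> {extent_count(size)} extent(s), {replicated_extents(size)} stored copies")
```

A 1000MB file therefore spans four extents (twelve stored copies), and could in principle be read by four vertices at once, while a 100MB file gives only one.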

The format of a file has a huge impact on storage and parallelisation. Splittable formats (row-oriented files such as CSV) are parallelisable, because rows do not span extents. Non-splittable formats (files that are not row oriented and often deliver data in blocks, such as XML or JSON) cannot be parallelised, because their data spans extents, so they can only be processed by a single vertex.
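The effect of format on parallelism can be sketched as below. This is an illustration only: the extension lists are hypothetical and mirror just the examples named above (CSV versus XML/JSON), and the 250MB extent size is the figure quoted earlier.

```python
import math
from pathlib import PurePosixPath

EXTENT_SIZE_MB = 250  # extent size quoted in this post (assumption)

# Hypothetical classification based on the examples in the text:
# row-oriented formats split cleanly at extent boundaries, block formats do not.
SPLITTABLE = {".csv", ".tsv"}
NON_SPLITTABLE = {".json", ".xml"}


def max_parallel_vertices(path: str, file_size_mb: float) -> int:
    """Upper bound on vertices that can read one file in parallel."""
    suffix = PurePosixPath(path).suffix.lower()
    extents = max(1, math.ceil(file_size_mb / EXTENT_SIZE_MB))
    if suffix in SPLITTABLE:
        return extents  # one vertex per extent
    if suffix in NON_SPLITTABLE:
        return 1  # the whole file must be read by a single vertex
    raise ValueError(f"unclassified format: {suffix!r}")


print(max_parallel_vertices("/Raw/sales.csv", 1000))
print(max_parallel_vertices("/Raw/sales.json", 1000))
```

The same 1000MB of data can be spread over four vertices as CSV but is confined to one vertex as JSON, which is why row-oriented formats are usually preferred for large files.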

In addition to unstructured data, the Azure Data Lake Store also holds structured data as row-oriented, distributed clustered index storage, which can also be partitioned. The data itself is held within the “Catalog” folder of the Data Lake Store, but the metadata is held in Azure Data Lake Analytics. For many, working with structured data in the data lake is very similar to working with SQL databases.

Azure Data Lake Store Best Practices

The best practices generally follow the framework outlined in the following blog: https://adatis.co.uk/Shaping-The-Lake-Data-Lake-Framework

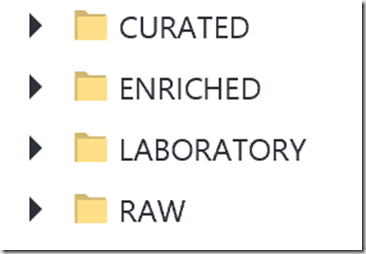

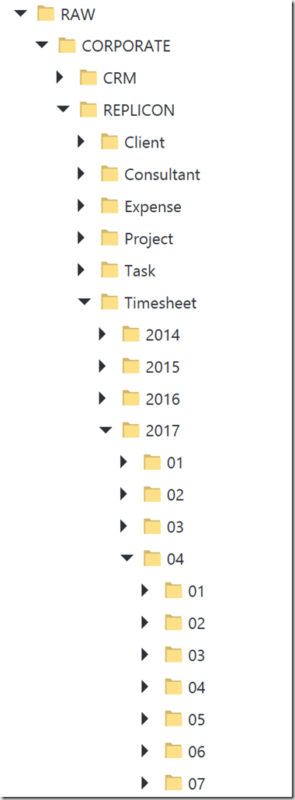

The framework allows you to manage and maintain your data lake. So, when setting up your Azure Data Lake Store, you will want to start by creating the following folders in your root:

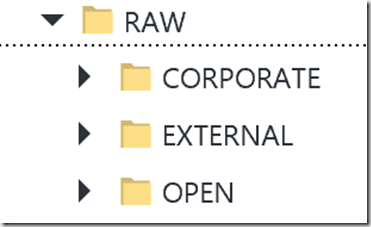

Raw is where data lands directly from source, and its underlying structure is ultimately organised by Source.

Source is categorised by Source Type, which reflects the ultimate source of data and the level of trust one should associate with the data.

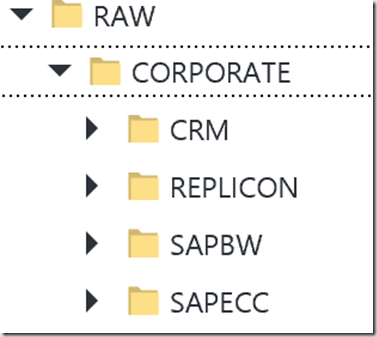

Within the Source Type, data is further organised by Source System.

Within the Source System, the folders are organised by Entity and, if possible, further partitioned using the standard Azure Data Factory Partitioning Pattern of Year > Month > Day etc., as this will allow you to achieve partition elimination using file sets.
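The Raw hierarchy and the date partitioning can be sketched as path construction. This is a minimal sketch assuming zero-padded year/month/day folders; the source type, source system and entity names in the usage line are hypothetical. Listing only the day folders inside a date range is the same idea that partition elimination relies on: folders outside the range are never touched.

```python
from datetime import date, timedelta
from pathlib import PurePosixPath


def raw_path(source_type: str, source_system: str, entity: str, day: date) -> PurePosixPath:
    """/Raw/<Source Type>/<Source System>/<Entity>/<Year>/<Month>/<Day>"""
    return PurePosixPath(
        "/Raw", source_type, source_system, entity,
        f"{day.year:04d}", f"{day.month:02d}", f"{day.day:02d}",
    )


def paths_for_range(source_type: str, source_system: str, entity: str,
                    start: date, end: date) -> list:
    """Only the day folders within [start, end] are returned; everything else is skipped."""
    days = (end - start).days
    return [raw_path(source_type, source_system, entity, start + timedelta(days=d))
            for d in range(days + 1)]


# Hypothetical names for illustration only.
for p in paths_for_range("Internal", "CRM", "Customer", date(2017, 1, 30), date(2017, 2, 2)):
    print(p)
```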

Enriched and Curated are organised by Destination Data Model, and each Destination Data Model folder is in turn structured by Destination Entity. Enriched and Curated data can live in the folder structure, within the database, or both.
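A matching sketch for the Enriched and Curated zones, under the same assumptions as the Raw example: the data model and entity names used below are hypothetical, and the function only builds paths rather than creating anything in the store.

```python
from pathlib import PurePosixPath

# Zones organised by destination rather than by source.
MODELLED_ZONES = {"Enriched", "Curated"}


def modelled_path(zone: str, data_model: str, entity: str) -> PurePosixPath:
    """/<Zone>/<Destination Data Model>/<Destination Entity>"""
    if zone not in MODELLED_ZONES:
        raise ValueError(f"{zone!r} is not organised by destination data model")
    return PurePosixPath("/", zone, data_model, entity)


# Hypothetical names for illustration only.
print(modelled_path("Curated", "Sales", "Orders"))
```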
