The Problem

My current project involves Azure’s Data Lake Store and Analytics. We’re using the SSIS Azure Feature Pack’s Azure Data Lake Store Destination to move data from our client’s on-premises system into the Lake, then using U-SQL to generate a delta file, which goes on to be loaded into the warehouse. U-SQL is a “schema-on-read” language, which means you need a consistent and predictable file format to be able to define the schema as you pull data out.

We ran into an issue with this schema-on-read approach, but once you understand the issue, it’s simple to rectify. The Data Lake Store Destination task does not use the column ordering shown in the destination mapping. Instead, it appears to rely on an underlying column identifier. This means that if you apply any conversion to a column in the data flow, that column will automatically be placed at the end of the file. This takes away the predictability of the file format and can make your schema inconsistent if you have historic data in the Lake.

An Example

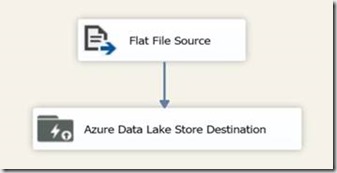

Create a simple package which pulls data from a flat file and moves it into the Lake.

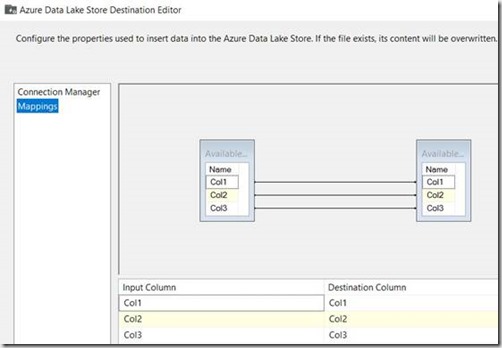

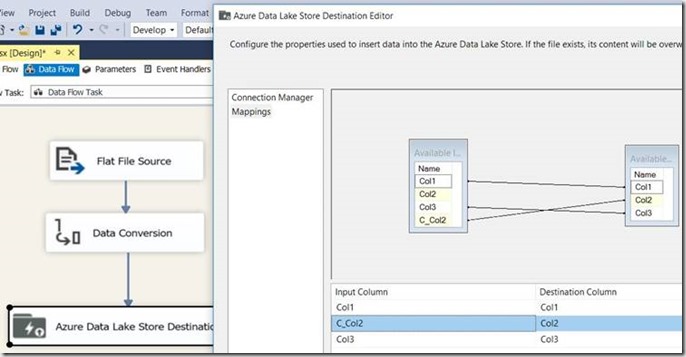

Mappings of the Destination are as follows:

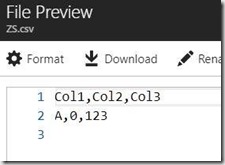

Running the package and viewing the file in the Lake gives us the following (as we’d expect, based on the mappings):

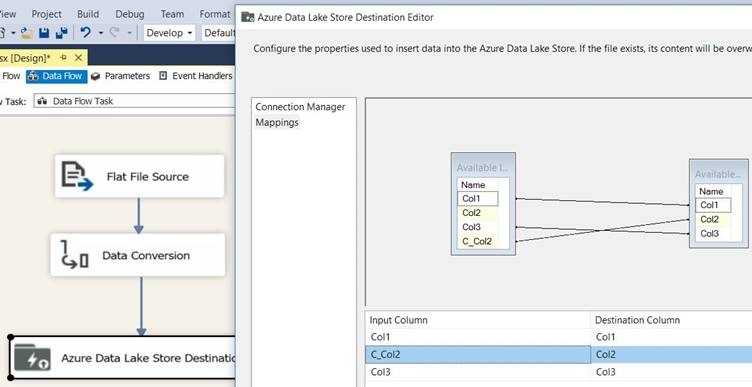

Now add a conversion task (the one in my package just converts Col2 to a DT_I4), update the mappings in the destination, and run the package.

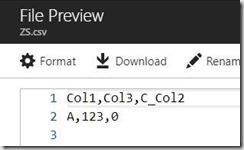

Open the file in the Lake again, and you’ll find that Col2 is now at the end and contains the name of the input column, not the destination column.

The Fix

As mentioned in “The Problem” section, the fix is extremely simple: just handle it in your U-SQL by re-ordering the columns appropriately during extraction! This article is more about giving a heads-up and highlighting the problem than offering a mind-blowing solution.
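
As a rough illustration, the re-ordering might look something like the sketch below. It assumes a three-column file (Col1, Col2, Col3) in which the converted Col2 has been written last, a single header row, and comma-delimited output. The file paths, the column list, and the types of Col1 and Col3 are hypothetical, so adjust them to match your own package.

```usql
// Declare the columns in the order they physically appear in the file:
// the converted column (Col2) was written last by the destination task.
@raw =
    EXTRACT Col1 string,
            Col3 string,
            Col2 int   // DT_I4 in SSIS corresponds to a 32-bit integer
    FROM "/staging/example.csv"                 // hypothetical path
    USING Extractors.Csv(skipFirstNRows: 1);    // skip the header row

// Put the columns back into the order the rest of the process expects.
@ordered =
    SELECT Col1,
           Col2,
           Col3
    FROM @raw;

OUTPUT @ordered
TO "/curated/example.csv"                       // hypothetical path
USING Outputters.Csv(outputHeader: true);
```

Because the EXTRACT statement binds columns by position rather than by name, the header text in the file is irrelevant here; only the declared order has to match what the destination actually wrote.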
