Last week we attended the Minds Mastering Machines (MCubed) conference at the QEII Centre in London. MCubed is an artificial intelligence (AI), machine learning (ML) and analytics conference, featuring speakers on the front line of AI research – in academia, start-ups and big tech companies, such as Facebook and Adobe.

Besides the great food and fantastic location, the conference provided a window into the current state of research in the industry and the challenges associated with applying it in the business world.

In this blog post I will summarise some of the key themes and discussion points at the event.

Explainable AI

The majority of talks focused on using Deep Learning and Neural Network techniques to classify unstructured data, such as images, speech, video and text. Although these methods can achieve very high predictive accuracy, they are black box algorithms: it is very difficult to understand how they came to a decision. When building a machine learning model, there is a trade-off to consider between accuracy and interpretability.

In general, “classic machine learning” techniques, such as regression, Bayesian and tree-based methods, offer high interpretability but lower accuracy, especially on non-tabular, unstructured data. However, for more typical business scenarios, such as predicting customer churn, understanding how the AI came to a decision is vital (and accuracy can still be very high). This is especially true in a GDPR world, where individuals have the right to an explanation of how an algorithm came to its decision.

‘Explainable AI’ (XAI) is an emerging field that aims to improve oversight and transparency of black box algorithms. As a result, new techniques, such as LIME scores and SHAP scores, have been developed that provide insight into how each feature influences the model’s prediction on a sample-by-sample basis. The field is growing rapidly and will become more prominent as AI has more influence on our daily lives. For more information, see this article by Forbes.
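To make the idea of per-sample feature attribution a little more concrete, here is a minimal sketch of the Shapley value calculation that SHAP scores are based on. It does not use the SHAP library itself: it computes exact Shapley values by brute force, which is only practical for a handful of features. The `toy_churn_score` model, its feature names and all the numbers are hypothetical and purely for illustration, and treating a feature as "absent" by swapping in a baseline value is an assumption of this sketch.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, sample, baseline):
    """Exact Shapley attribution of predict(sample) relative to predict(baseline)."""
    n = len(sample)
    features = range(n)

    def value(coalition):
        # Features in the coalition take the sample's value;
        # all other features stay at the baseline value.
        mixed = [sample[i] if i in coalition else baseline[i] for i in features]
        return predict(mixed)

    contributions = []
    for i in features:
        others = [j for j in features if j != i]
        phi = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                without_i = set(subset)
                with_i = without_i | {i}
                # Marginal effect of adding feature i to this coalition.
                phi += weight * (value(with_i) - value(without_i))
        contributions.append(phi)
    return contributions


def toy_churn_score(x):
    # Hypothetical model with an interaction term, for illustration only.
    months_inactive, support_tickets, has_discount = x
    return (2 * months_inactive + 3 * support_tickets
            + months_inactive * support_tickets - 4 * has_discount)


feature_names = ["months_inactive", "support_tickets", "has_discount"]
sample = [3, 2, 1]      # the customer we want to explain (made-up values)
baseline = [0, 0, 0]    # reference customer (made-up values)

contributions = shapley_values(toy_churn_score, sample, baseline)
for name, phi in zip(feature_names, contributions):
    print(f"{name}: {phi:+.2f}")
```

The contributions always add up to the difference between the prediction for the sample and the prediction for the baseline, which is what makes this kind of score easy to read as "how much did each feature push this prediction up or down".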

Operational AI and Decision Intelligence

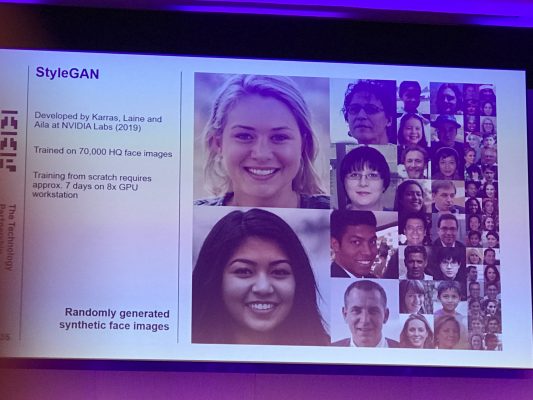

Although current AI research is very impressive, especially the work surrounding Generative Adversarial Networks (GANs) for Computer Vision (see image above), there is some way to go before we reap the benefits across business and the real world.

At the moment, most AI and ML projects fail to deliver, and many reasons for this were discussed at the conference. In summary, the field is still in its infancy and is still dominated by academic thinking.

On one side you have technical limitations, such as a lack of access to large volumes of high-quality data; lack of standardised processes in development; lack of scalable solutions across organisations; lack of productionisation of models; and high computational costs.

On the other, you have non-technical reasons, including typical organisational challenges of politics, hierarchy and collaboration. Furthermore, because data science and AI projects are inherently experimental, they are risky in nature and there is no guarantee of success.

To combat this, there is a strong desire to make AI more operational. This means incorporating best practices from software engineering, such as Agile development, DevOps, CI/CD and version control, while recognising that there is a difference between the two cultures. It includes democratising AI and improving user experience to make it more accessible to less technical users. Furthermore, it involves productionising models, developing frameworks and ensuring ongoing model management.

Decision Intelligence was the term used by keynote speaker Dr. Lorien Pratt to explain how AI needs to be augmented with management science, neuroscience and social sciences, such as psychology and economics, in order to be truly operational and revolutionary. This means thinking about decision-making, actions and outcomes early on. The term ‘Decision Intelligence’ is making some headway and was recently added to the Gartner Hype Cycle for 2019.

Conclusion

To conclude, AI and machine learning are still immature fields and there is work to be done before they are operational within business. The latest research and developments in deep learning are exciting, but interpretability needs to be considered and further developed.
