Recently I was playing around with Azure Data Factory, working my way through an online tutorial, when I hit a frustrating problem: the status message was not very helpful and my data wasn't moving anywhere! The aim of the exercise was to move some text files from Azure Data Lake Store into a table in an Azure SQL Database. Sounds easy enough!

The data within the Data Lake Store was organised into a Year and Month folder hierarchy, and each day's transactions were stored in a file named after the day, inside the relevant month folder. The task was to create a pipeline that copies the dataset in the Data Lake Store over to the dbo.Orders table in Azure SQL DB every day within the scheduled period (Q1 2016).

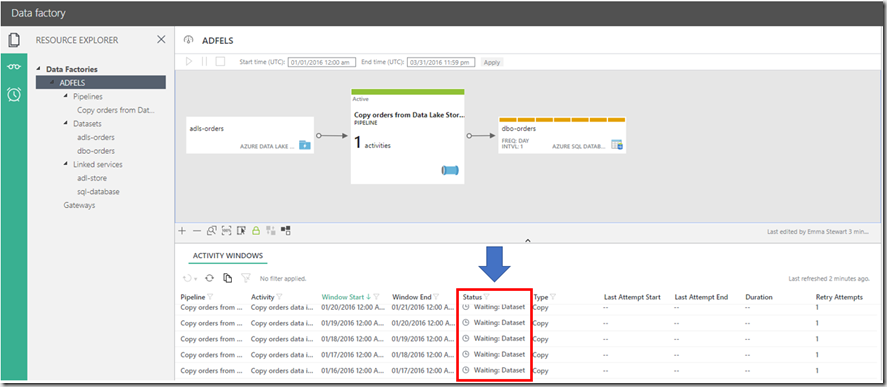
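As a rough sketch of what such a pipeline script might look like, assuming the version 1 JSON layout (the one that works with daily slices), here is a minimal copy pipeline. The pipeline, activity and dataset names are hypothetical placeholders, not the ones from the tutorial, and the start and end values are just one way of expressing Q1 2016.

```json
{
  "name": "ExampleCopyOrdersPipeline",
  "properties": {
    "description": "Hypothetical sketch: copy daily order files from Data Lake Store to dbo.Orders",
    "activities": [
      {
        "name": "ExampleCopyOrders",
        "type": "Copy",
        "inputs": [ { "name": "ExampleOrdersInput" } ],
        "outputs": [ { "name": "ExampleOrdersOutput" } ],
        "typeProperties": {
          "source": { "type": "AzureDataLakeStoreSource" },
          "sink": { "type": "SqlSink" }
        },
        "scheduler": { "frequency": "Day", "interval": 1 }
      }
    ],
    "start": "2016-01-01T00:00:00Z",
    "end": "2016-04-01T00:00:00Z"
  }
}
```

The daily scheduler is what produces one activity window (slice) per day across the scheduled period.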

After creating all the JSON scripts and deploying them (with no errors), I clicked on the ‘Monitor and Manage’ tile to check that everything was working as it should and to monitor progress. After waiting for at least 10 minutes, I started to get frustrated.

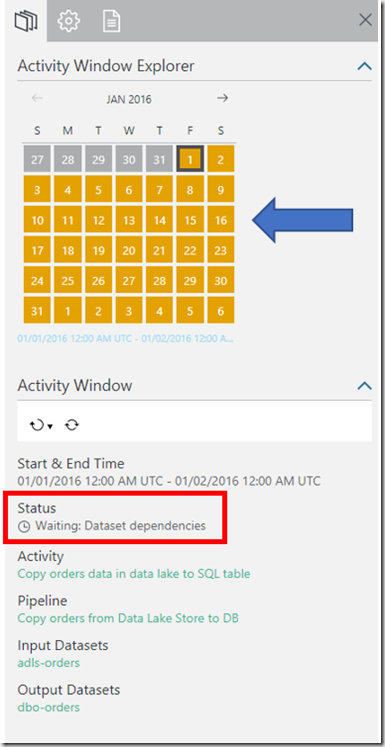

As you can see, all the Pipeline Activities for each slice have a Status of ‘Waiting: Dataset Dependencies’, and if you look at the Activity Window Explorer (see below), the activity window for each day shows a Status of Waiting (a solid orange square).

The files existed in the correct folders within my Data Lake Store and there were no errors during deployment, so what was it waiting for?!

Well, it turns out we need to set an additional property on the input dataset to let Data Factory know that the data is generated externally, not produced inside Data Factory by another pipeline. Without it, Data Factory waits for an upstream activity to produce each slice, and that never happens.

That property is "external": true, at the bottom of the script.

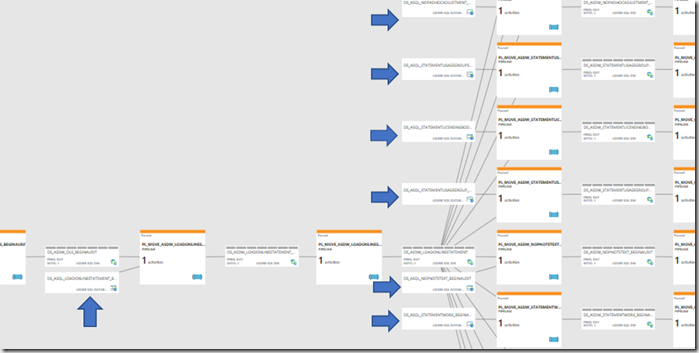
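For anyone who can't read the screenshot, here is a minimal sketch of an input dataset with the property set, again assuming the version 1 dataset JSON layout. The dataset name, linked service name, folder path, file extension and delimiter are all hypothetical; only the "external": true line is the point of the example.

```json
{
  "name": "ExampleOrdersInput",
  "properties": {
    "type": "AzureDataLakeStore",
    "linkedServiceName": "ExampleDataLakeStoreLinkedService",
    "typeProperties": {
      "folderPath": "example/orders/{Year}/{Month}",
      "fileName": "{Day}.txt",
      "format": { "type": "TextFormat", "columnDelimiter": "," },
      "partitionedBy": [
        { "name": "Year", "value": { "type": "DateTime", "date": "SliceStart", "format": "yyyy" } },
        { "name": "Month", "value": { "type": "DateTime", "date": "SliceStart", "format": "MM" } },
        { "name": "Day", "value": { "type": "DateTime", "date": "SliceStart", "format": "dd" } }
      ]
    },
    "availability": { "frequency": "Day", "interval": 1 },
    "external": true
  }
}
```

The output dataset (the SQL table) should not need the flag, since the copy activity itself produces it.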

Below is an example of a data factory; the arrows point to the datasets that need this external property set to true. Using the diagram of the pipeline once it is deployed, it is easy to identify which datasets need to be external, as they are not linked to anything upstream.
