In my previous post I have demonstrated how we can create Power BI CICD deployment pipeline with DevOps classic pipelines. This blog is going to show slightly different approach. I am going to focus on how to create DevOps pipeline for Power BI content with YAML.

Assumptions

I assume that reader:

- have good understanding of DevOps Portal including DevOps Git Source Control and DevOps CI/CD Pipelines (both classic and YAML)

- have read previous blog how to Create CI/CD with Classic DevOps Pipelines (link available in reference section)

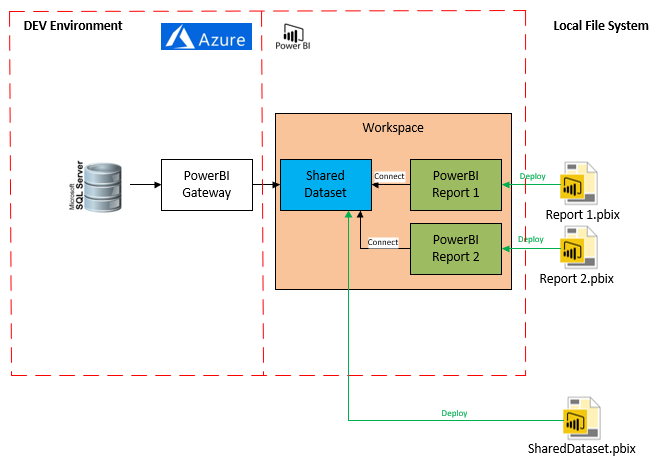

Architecture

High level Architecture diagram for my solution looks like below. It is composed of single shared dataset that contain the model and Power BI reports with the live connection configured to the shared dataset. Everything is deployed to the same workspace.

Prerequisites

Tools necessary for setting up pipeline

- Access to DevOps Portal

- Power BI Web account with admin access

- Visual Studio / VS Code with GIT integration

This need to be created/configured before starting:

- DevOps

- DevOps Project Created (mandatory)

- GIT repository configured and mapped to local folder on the dev machine (mandatory)

- Power BI

- Reports files created and stored in source control (mandatory)

- Shared dataset created and stored in source control (mandatory)

- Gateways to the source system configured for all environments (optional)

- Azure Resources (Dev and Test environment)

- Azure SQL Server (mandatory)

- Azure SQL Database (mandatory)

- Virtual Machines with Gateways installed (optional)

Ready solution with the scripts can be found here.

Problem Definition

Power BI Content deployment is very manual. In me previous blog I have explained reasons why and demonstrated how we can use classic DevOps pipelines to eliminate most of the deployment pains. Classic pipelines were great to start with for less advanced users, but they have some minor disadvantages that has been described below:

- Pipeline Definitions (build and release) are not stored in source control

- When imploring pipeline to new project with new source control we need to recreate pipeline definition from JSON which is not very convenient.

- Developers seem to favour YAML pipelines over classic ones so there is a strong push from Microsoft towards YAML pipelines – that means that classic pipelines might become deprecated at some point in the future

Solution

All the problems described above can be overcome with a single DevOps YAML pipeline. The main idea behind YAML pipelines is to keep pipeline definition as code.

This way provides some significant advantages over classic approach, that is:

- greater reusability – easier way of importing the pipeline to new solution, no modifications required, minimum amount of details exported

- ability to store pipeline in source control

- more control over deployment pipelines with runtime variables, sharing variables between jobs etc.

Our pipeline is going execute set of PowerShell scripts. The main role of this PowerShell scripts is to use Power BI REST API and PowerShell Modules to deploy Datasets and Reports to chosen workspaces. All the configuration is injected from DevOps pipelines variables into scripts so values can changes between environments.

Scripts used in the solution has been created for the configuration specified below, they may require some modification if your architecture is different. I have used the same scripts in classic pipelines so there is more details available in my previous post.

Implementation

Our deployment pipeline is going to be composed of 3 stages:

- BUILD – collects artefacts from source control

- DEV – Release to Dev Power BI environment

- TEST – Release to Test Power BI environment

This approach is not going to split build and release into separate pipeline although this can be achieved if required. In this example I used single pipeline to store both stages for the simplicity. The consequence of that is that all the stages are going to be deployed automaticaly.

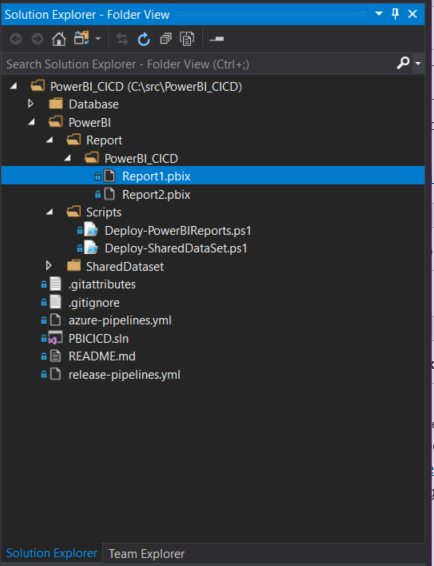

Before we start we need to put solution with all the code in the source control. I preffered choice is to use DevOps GIT.

I organised my solution like below to separate scripts, shared datasets and Reports. Raports are additionaly grouped by the Power Workspace Folder – e.g. reports in “PowerBI_CICD” would be deployed to “PowerBI_CICD_Dev” and “PowerBI_CICD_Test” workspace.

To create new pipeline we need to navigate to DevOps portal and follow below steps:

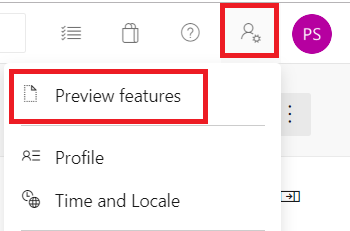

- Switch Multistage pipelines in Preview Option for you DevOps account – This option can be enabled in top right corner of your DevOps portal like on the picture below. It may require administrator privileges on your DevOps project. When you select Preview Features new tab will open. We need to enable option called “Multistage pipelines”.

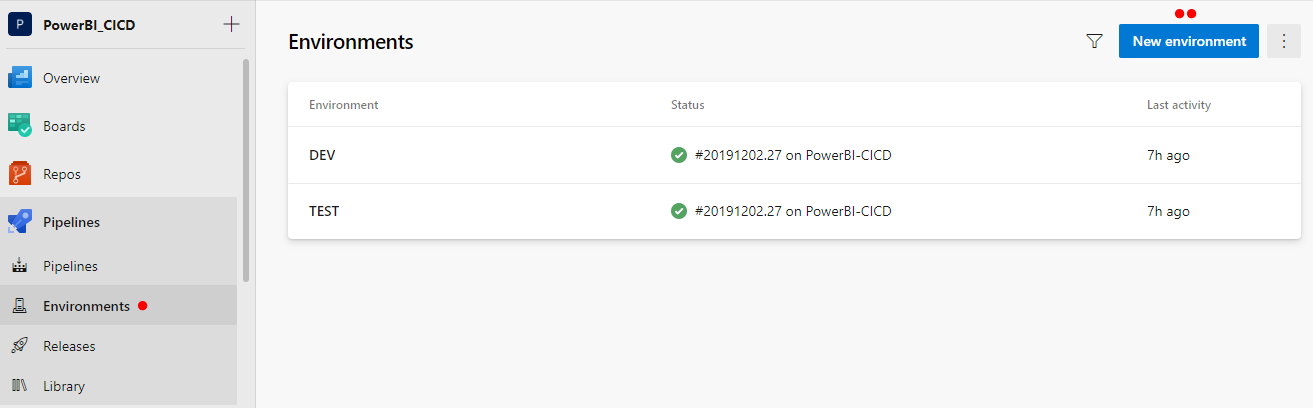

- Create empty environments for DEV and TEST

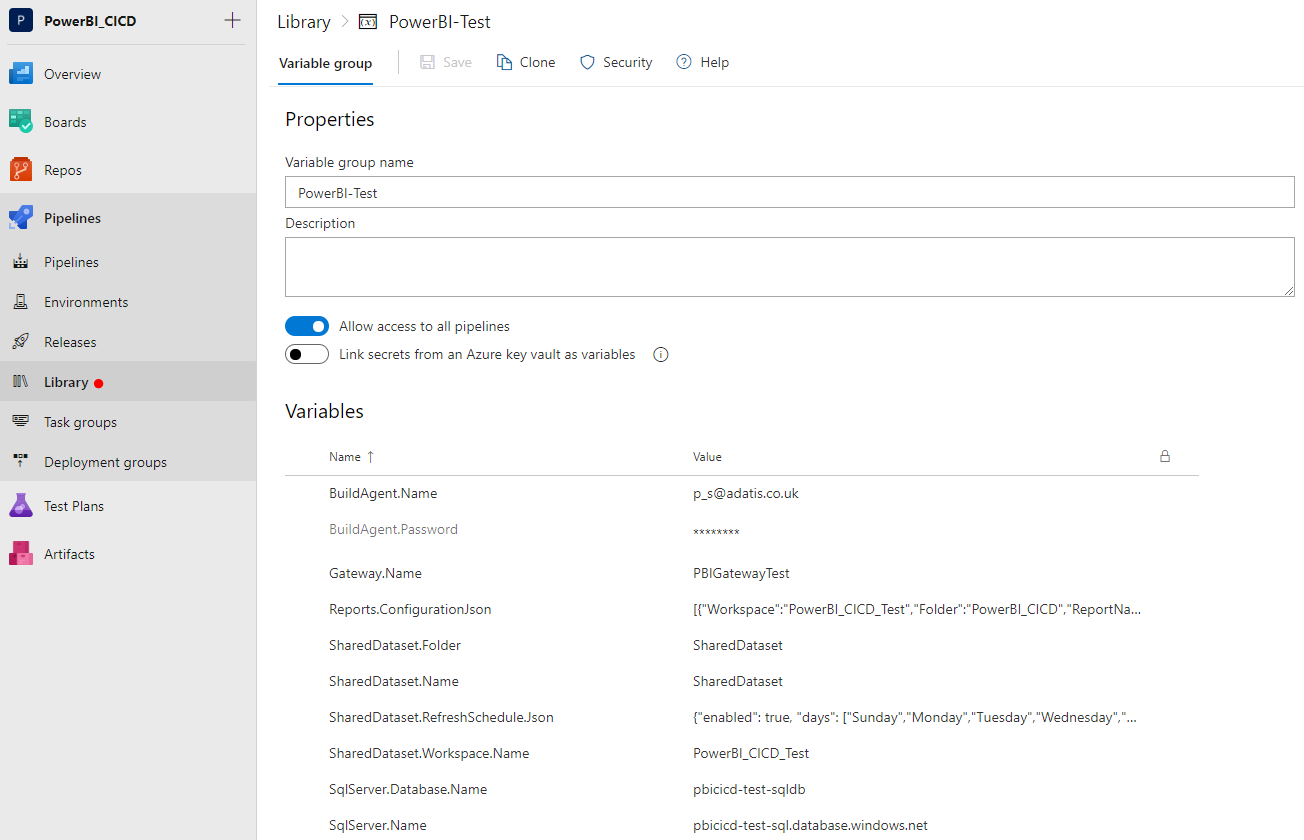

This step would allow us to group deployments and also to configure approval process if required. At the moment Environments allows to setup Azure Kubernetes (k8s) only as a target resource. Since we are not using k8s service, we are going to chose option to add at later stage (none – no resources attached). To create new environment we need to navigate to Pipelines/Environments section. - Define new variables groups

Names of the variables in Variable Groups need to be exactly the same as specified in the YAML document. There should be single variable group per each environment following naming convention PowerBI-{env}.

This is a list of all variables that need to be defined.- BuildAgent.Name – this is built agent name that is set as an admin on the Power BI Servcie. It requires permissions to list and modify workspaces

- BuildAgent.Password – this is password for the build agent

- Gateway.Name [Optional]– If we use gateway for the connection, this defines name of the gateway that we connect our dataset to. It does not create gateway if it does not exist

- Reports.ConfigurationJson – this is a JSON array that defines configuration for the reports deployment – only reports listed in the json will be deployed (but all reports that has dependencies will be re-binded into new dataset)

[{ "Workspace":"<Workspace.Name>", "Folder":"<Visual.Studio.Report.Root.Folder>", "ReportName":"<Report.Name>" }]SharedDataset.Folder – this defines folder that Dataset pbix file is present in

- SharedDataset.Name – this defines name of the shared Dataset

- SharedDataset.RefreshSchedule.Json – defines refresh schedule in form of json object e.g.

{"enabled": true, "days": ["Sunday","Monday","Tuesday","Wednesday","Thursday","Friday","Saturday"],"times": ["06:00"],"localTimeZoneId": "UTC", "notifyOption": "mailNotification"} - SharedDataset.Workspace.Name – this defines Workspace for shared dataset

- SqlServer.Database.Name – This defines database gateway connects to

- SqlServer.Name – This defines server name that gateway connects to

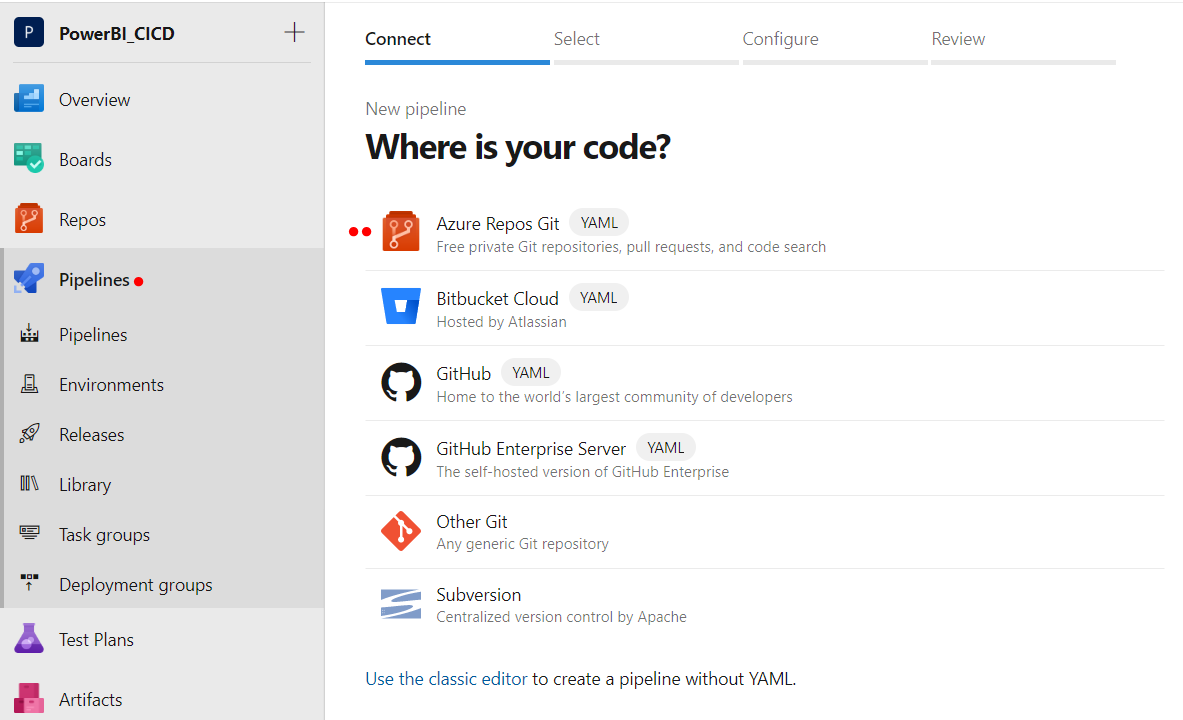

- Navigate to Pipelines and Create new YAML pipeline

When creating new pipeline -choose Azure Repos Git (YAML) source control. Then in the select section choose your project repository.

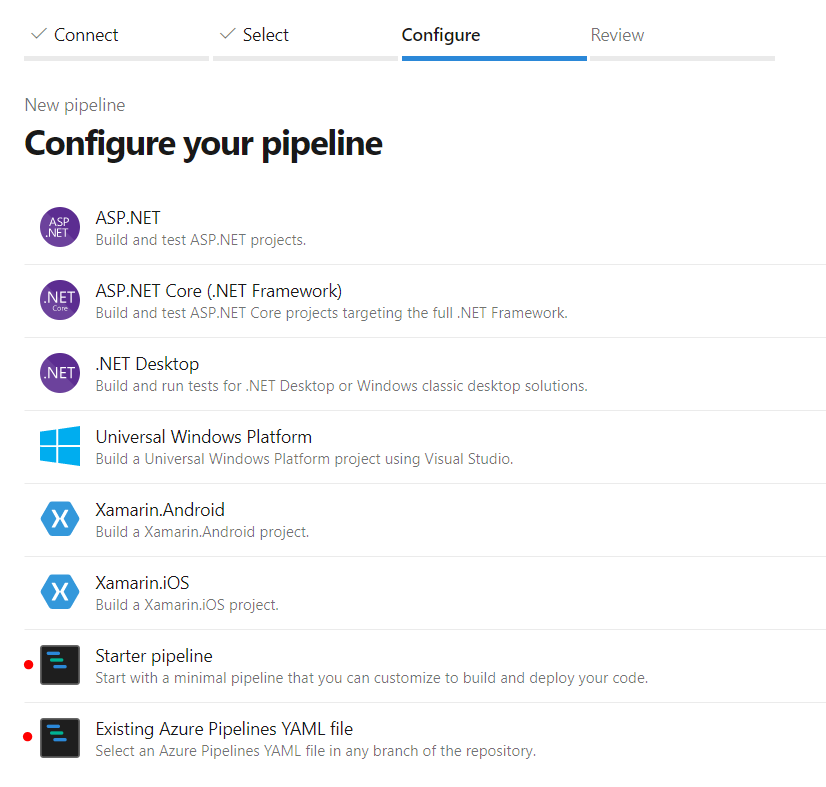

Next step is to configure one of the available options: existing pipeline by navigating to azure-pipelines.yml or creating new empty pipeline and replacing YAML code. If you used demo solution provided in this blog you can reference existing file.

If you preferer to create new pipeline, replace initial YAML code with this:## Adds CICD Trigger via Pull Request pr: branches: include: - master paths: include: - PowerBI/* pool: vmImage: 'vs2017-win2016' ## BUILD ### stages: - stage: BUILD jobs: - job: "Build" steps: - task: CopyFiles@2 inputs: SourceFolder: '$(Build.SourcesDirectory)/PowerBI/' Contents: '**' TargetFolder: '$(build.artifactstagingdirectory)/PowerBI' - task: PublishBuildArtifacts@1 inputs: PathtoPublish: '$(Build.ArtifactStagingDirectory)/PowerBI' ArtifactName: 'PowerBI' publishLocation: 'Container' ### RELEASE - DEV ### - stage: DEV variables: - group: PowerBI-Dev # Variable Group used for PowerBI Deployments jobs: - deployment: DeployWeb displayName: deploy Web App pool: vmImage: 'vs2017-win2016' environment: 'DEV' strategy: runOnce: deploy: steps: - task: DownloadPipelineArtifact@2 displayName: 'Download artefacts' inputs: artifact: 'PowerBI' path: $(Build.SourcesDirectory)\bin # Remarks: Deployment of Shared Dataset in DEV is disabled #- task: PowerShell@2 # displayName: 'Deploy Shared Dataset' # inputs: # filePath: '$(System.DefaultWorkingDirectory)\bin\Scripts\Deploy-SharedDataSet.ps1' # arguments: '-PowerBIFilePath "$(System.DefaultWorkingDirectory)\bin\$(SharedDataset.Folder)\$(SharedDataset.Name).pbix" -WorkspaceName "$(SharedDataSet.Workspace.Name)" -SharedDatasetName "$(SharedDataset.Name)" -BuildAgentLogin "$(BuildAgent.Name)" -BuildAgentPassword "$(BuildAgent.Password)" -SourceSqlServer "$(SqlServer.Name)" -SourceDatabase "$(SqlServer.Database.Name)" -GatewayName "$(Gateway.Name)" -InstallLibraries "True"' - task: PowerShell@2 displayName: 'Deploy Reports' inputs: filePath: '$(System.DefaultWorkingDirectory)\bin\Scripts\Deploy-PowerBIReports.ps1' arguments: '-PowerBIDirectory "$(System.DefaultWorkingDirectory)\bin\Report" -DatasetWorkspaceName "$(SharedDataSet.Workspace.Name)" -DatasetName "$(SharedDataset.Name)" -BuildAgentLogin "$(BuildAgent.Name)" -BuildAgentPassword "$(BuildAgent.Password)" -ReportsToDeploy ''$(Reports.ConfigurationJson)''' ### RELEASE - TEST ### - stage: TEST variables: - group: PowerBI-Test # Variable Group used for PowerBI Deployments jobs: - deployment: DeployWeb displayName: deploy Web App pool: vmImage: 'vs2017-win2016' environment: 'TEST' strategy: runOnce: deploy: steps: - task: DownloadPipelineArtifact@2 displayName: 'Download artefacts' inputs: artifact: 'PowerBI' path: $(Build.SourcesDirectory)\bin - task: PowerShell@2 displayName: 'Deploy Shared Dataset' inputs: filePath: '$(System.DefaultWorkingDirectory)\bin\Scripts\Deploy-SharedDataSet.ps1' arguments: '-PowerBIFilePath "$(System.DefaultWorkingDirectory)\bin\$(SharedDataset.Folder)\$(SharedDataset.Name).pbix" -WorkspaceName "$(SharedDataSet.Workspace.Name)" -SharedDatasetName "$(SharedDataset.Name)" -BuildAgentLogin "$(BuildAgent.Name)" -BuildAgentPassword "$(BuildAgent.Password)" -SourceSqlServer "$(SqlServer.Name)" -SourceDatabase "$(SqlServer.Database.Name)" -GatewayName "$(Gateway.Name)" -InstallLibraries "True"' - task: PowerShell@2 displayName: 'Deploy Reports' inputs: filePath: '$(System.DefaultWorkingDirectory)\bin\Scripts\Deploy-PowerBIReports.ps1' arguments: '-PowerBIDirectory "$(System.DefaultWorkingDirectory)\bin\Report" -DatasetWorkspaceName "$(SharedDataSet.Workspace.Name)" -DatasetName "$(SharedDataset.Name)" -BuildAgentLogin "$(BuildAgent.Name)" -BuildAgentPassword "$(BuildAgent.Password)" -ReportsToDeploy ''$(Reports.ConfigurationJson)'''This single pipeline that would contain build and release stages in a single pipeline. It triggers automatically when pull request is made to the master branch. All the stages will execute synchronously in order of BUILD, DEV, TEST. Previous stage need to complete successfully before the next one starts. This pipeline will run deployment in continuous integration mode. It is not possible enable manual deployment for chosen stage at this time. We could achieve similar effect by separating build and release stages into separate YAML pipelines and configure dependencies between files, but this is outside of the scope of this blog.

Each release stage has- reference to Variable Groups (PowerBI-Dev, PowerBI-Test). Scripts variables are substituted during compilation time.

- reference to the Environment that allows us to configure acceptance gates between deployments stages.

- two PowerShell task to run deployment scripts

Permission requirements

- Account used in PowerShell scripts executed by the pipeline need Power BI Web admin’s permissions level

- Admin access to DevOps that allows enabling preview features

Limitations

- All the limitations for REST API, Power BI PowerShell modules described in my previous post still apply.

- Manual Deployment of the stages is not available in a single pipeline YAML file

Summary

DevOps YAML Pipelines provide easy and quick way of deployment of your Power BI objects to Power BI service. This approach provide some significant advantages over classic pipelines:

- storing pipeline definition as a code in your source control repository

- simplifying process of uploading you pipelines to DevOps portal

YAML pipelines should be first choice for solutions that required to be setup on different tenancies e.g. template deployed by consultancy firm on the client side.

It is worth to remember that YAML pipelines reasonable new, some of the features are still under development. Many of the features that are available in classic mode have not been migrated yet and some of them are only available in preview mode.

References

You can download all materials from this blog from here, including solution template of the solution.

- PowerBI CICD Solution

- YAML release pipelineazure-pipelines

- Power BI CI/CD with DevOps YAML Pipelines blog post

Does this still work? I was trying to do much the same thing, but I get a HTTP 400 from any attempt to deploy a .pbix referencing a shared dataset to the PBI service, which seems to make sense in light of the final bullet listed here https://docs.microsoft.com/en-us/power-bi/desktop-report-lifecycle-datasets#limitations-and-considerations

Hi Hans.

I have not retested the code since i wrote it. At the time of writing the blog It was working just fine with the shared dataset. To run this scripts you need to have administrator rights on your Power BI tenant, and i believe that this is what your are missing.

Let me explain how I overcame that limitations that Microsoft is pointing out in the blog that you pointed out.

I generally divided this deployment into two steps: Deployment of the shared dataset and deployment of the reports. I am going to touch only deployment of the dataset here. When Dataset is deployed by Powershel for a moment of the deployment you will have 2 datasets in a single workspace existing under the same name, but different GUIDs. Script will check all reports that are connected to the shared dataset and rebind all the reports from old dataset to new dataset and then old dataset gets deleted. In this case shared dataset gets updated. The down side of this is that dataset need to be refreshed after.

Also keep in mind that deployment of the shared dataset should not happen to DEV environment as it breaks shared dataset GUIDs that your reports are using for the live connection when you connect to them from dev pbix files. It is fine to deploy to Test and Prod this way.

It does indeed still work great! You were right – it took me too long to realise that the referenced instance of the dataset must exist in the service, and be visible to the deployment agent. Thanks!

Hi! Nice article. How can I extend this solution by having a second shareddatasettabular.pbix this time it should be related to azure analysis services. Then I would have mixed reports some getting data from sql and others from a tabular model in azure. How would you change the code to make that work?

What is the ‘build agent’ mentioned here?

Hi Riddhi. By Build Agent I mean the virtual machine the code is running on. If you are using DevOps it can either be (Micrososft Hosted) or you create your own VM (and install some software). I can also use this term to describe the Service Principal account that pipelines run under and this build agent would need to have permission to make changes to the resources that you change e.g. Power BI Namespace.(technically that is not 100% correct).