Often, when building data analytics solutions, we are asked to integrate with third-party tools that are not part of the Microsoft ecosystem. In this 3 part blog series, I’ll demonstrate how to send data to Salesforce and NetSuite using Azure Data Factory (ADF).

- Ingest your data to Salesforce Marketing Cloud using Azure Data Factory

- Ingest your data to Salesforce Service Cloud using Azure Data Factory

- Ingest your data to NetSuite using Azure Data Factory

NetSuite is a cloud-based Enterprise Resource Planning (ERP) software that helps businesses run more efficiently and cost-effectively.

Scenario

- A NetSuite administrator created a set of custom entities. An entity is split in two objects, Header and Item (eg. Purchase Order Header and Purchase Order Item). You have to build a process in ADF that will select data from Azure SQL DB and ingest it to NetSuite.

Considerations & Limitations

- The copy activity in ADF contains a considerable list of connectors to Microsoft and third-party tools (list available here), however, at the time of writing this article, no connector to NetSuite has been developed. The alternative suggested in this blog is to use the SSIS Integration Toolkit for NetSuite from KingswaySoft (details here)

- To run SSIS packages in ADF, we have to provide an Azure SSIS Integration Runtime (SSIS IR). Further details can be found here

- NetSuite governs the web service traffic sent to its data center by limiting the number of records per request by the type of request (Add vs.Update vs.Search) as well as time of day (peak vs.off peak). Upsert requests are limited to 100 records, therefore, the the data has to be split in batches

Solution

The proposed pattern uses 2 ADF pipelines and one/many SSIS package:

- NetSuite Transfer Parent

- NetSuite Transfer Child

- NetSuite Entity

Marketing Cloud Parent

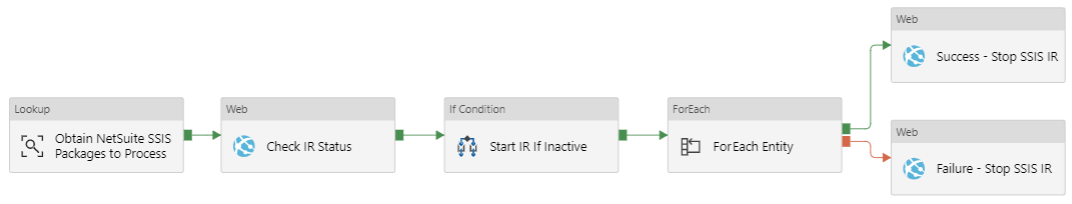

*Obtain NetSuite SSIS Packages to Process – Obtains the list of SSIS packages that will send data to NetSuite

*Check IR Status – Obtains the status of the SSIS IR. API details here

*Start IR If Inactive – If the status of the SSIS IR is different than Started, then a Web activity starts it using a REST API. Details here

*ForEach Entity – Contains an Execute Pipeline activity that invokes the NetSuite Transfer Child pipeline. The batch count can be changed to increase/decrease the number of concurrent executions

*Success – Stop SSIS IR – If the pipeline executed successfully, the SSIS IR is stopped using a REST API. Details here

*Failure – Stop SSIS IR – If there was errors during the execution of the pipeline, the SSIS IR is stopped using a REST API. Details here

NetSuite Transfer Child

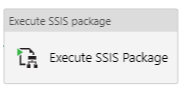

*Execute SSIS Package – Execute the SSIS packages

NetSuite Entity

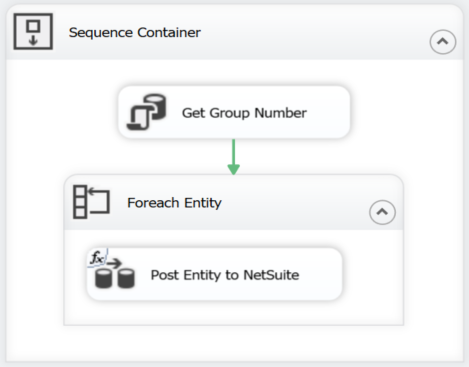

*Get Group Number – Calculates the size of each batch and stores the values in a GroupNumber table (Eg. Purchase Order Group Number)

*Post Entity to NetSuite – Iterates over the dataflow detailed below as many times as the number of calculated groups

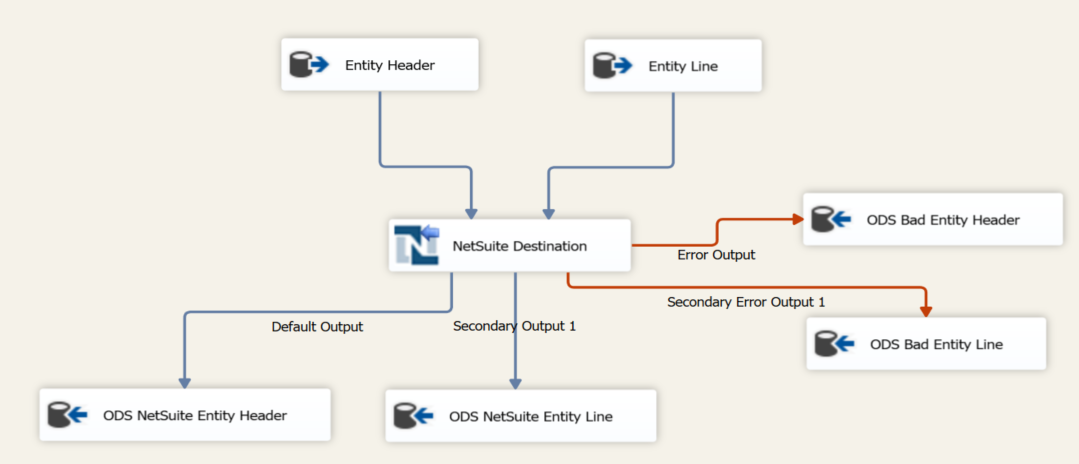

**Entity Header – Receives the group number as a parameter and retrieves the entity header details. The maximum number of headers per execution can’t be greater than 100 if running an upsert

**Entity Line – Receives the group number as a parameter and retrieves the entity line details. Each line is associated to a header from the same group

**NetSuite Destination – This component is part of the SSIS Integration Toolkit for NetSuite. It receives a primary and secondary input, performs an insert/update in NetSuite and returns an Internal Id for the records that were successfully posted and error details for the ones that didn’t succeed

**ODS Bad Entity Header – Stores the header records that were not added to NetSuite and the error details in a logging table in Azure SQL DB

**ODS Bad Entity Line – Stores the line records that were not added to NetSuite and the error details in a logging table in Azure SQL DB

**ODS NetSuite Entity Header – Stores the header records that were added to NetSuite and the generated internal Id in a logging table in Azure SQL DB

**ODS NetSuite Entity Line – Stores the line records that were added to NetSuite and the generated internal Id in a logging table in Azure SQL DB

Introduction to Data Wrangler in Microsoft Fabric

What is Data Wrangler? A key selling point of Microsoft Fabric is the Data Science

Jul

Autogen Power BI Model in Tabular Editor

In the realm of business intelligence, Power BI has emerged as a powerful tool for

Jul

Microsoft Healthcare Accelerator for Fabric

Microsoft released the Healthcare Data Solutions in Microsoft Fabric in Q1 2024. It was introduced

Jul

Unlock the Power of Colour: Make Your Power BI Reports Pop

Colour is a powerful visual tool that can enhance the appeal and readability of your

Jul

Python vs. PySpark: Navigating Data Analytics in Databricks – Part 2

Part 2: Exploring Advanced Functionalities in Databricks Welcome back to our Databricks journey! In this

May

GPT-4 with Vision vs Custom Vision in Anomaly Detection

Businesses today are generating data at an unprecedented rate. Automated processing of data is essential

May

Exploring DALL·E Capabilities

What is DALL·E? DALL·E is text-to-image generation system developed by OpenAI using deep learning methodologies.

May

Using Copilot Studio to Develop a HR Policy Bot

The next addition to Microsoft’s generative AI and large language model tools is Microsoft Copilot

Apr