Databricks Delta Lake is an open source storage layer that brings data reliability and new transformation possibilities to big data solutions built on data lake technology. Handling transactions natively in the file system lets developers work more intuitively and flexibly with their data while keeping it consistent and resilient, whatever its size. Delta Lake should be of interest to anyone who deals with big data or builds modern data warehouse solutions and wants to learn how to solve common data lake challenges. In this bitesize blog, I’d like to introduce the basics of Delta Lake, covering the problems it addresses and its key features.

Challenges in Harnessing Big Data

Data lakes play a leading role in the Modern Data Warehouse architecture. As a scalable, centralised repository of both structured and unstructured data, they facilitate the collection, manipulation and mining of big data. There are, however, some technical hurdles that have so far been difficult to overcome:

- Consistency problems with concurrent reads and writes on the data lake

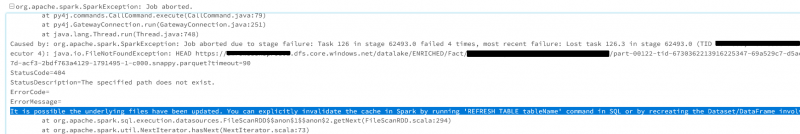

There is no isolation between transactions running simultaneously on the data lake, which often leads to IO failures (see the example above) or to readers seeing garbage data during writes. To tackle the problem, developers must build a costly workaround to ensure that readers see consistent data during writes and updates.

- Failing solutions due to low data quality

There is no automatic schema and data validation mechanism that prevents bad source data from getting into the solution. Bad source data can lead to a range of issues, from failures in data transformations through to pollution of your data warehouse, with the latter being very difficult to fix.

- Increasing processing time of big data

Rapid growth of the data on a data lake, which is particularly common with streaming jobs, can lead to long processing times for metadata operations and data transformations.

Databricks Delta Lake

To address the above obstacles, Databricks, the company founded by the original creators of Apache Spark, created Delta Lake. Delta Lake acts as a transactional storage layer that runs on top of an existing data lake, such as Azure Data Lake Storage (ADLS) Gen2, serving as a unified data management system without replacing the current storage system.

The main concept behind Delta Lake is that the data being transformed is stored in versioned Parquet files, and that the Spark engine maintains a transaction log, called the Delta Log. The log not only keeps information about each transaction (action) performed on the data, but also holds basic data statistics and details of the versioned files, such as the schema, timestamps of any changes, and size/partition information. In other words, the Delta Log serves as a single source of truth for all changes made to the table and is saved in JSON format (click here to read more about the Delta Log).
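To make the "single source of truth" idea concrete, here is a minimal sketch in plain Python (no Spark needed) of how a reader could replay the JSON commits to find the data files that currently make up a table. It assumes the usual layout of a `_delta_log` folder with zero-padded, numbered JSON commit files containing `add` and `remove` actions. The helper `write_commit` and the file names are made up for illustration, and a real log also holds checkpoints and other action types that this sketch ignores.

```python
import json
import tempfile
from pathlib import Path


def live_files(table_path):
    """Replay the JSON commits in _delta_log and return the current data files."""
    log_dir = Path(table_path) / "_delta_log"
    files = set()
    # Commit files are named with zero-padded version numbers, so sorting
    # by name replays them in commit order.
    for commit in sorted(log_dir.glob("*.json")):
        for line in commit.read_text().splitlines():
            if not line.strip():
                continue
            action = json.loads(line)
            if "add" in action:
                files.add(action["add"]["path"])
            elif "remove" in action:
                files.discard(action["remove"]["path"])
    return files


def write_commit(table_path, version, actions):
    """Hypothetical helper: write one commit file, one JSON action per line."""
    log_dir = Path(table_path) / "_delta_log"
    log_dir.mkdir(parents=True, exist_ok=True)
    commit = log_dir / f"{version:020d}.json"
    commit.write_text("\n".join(json.dumps(a) for a in actions))


def demo():
    with tempfile.TemporaryDirectory() as table:
        # Version 0 adds two files.
        write_commit(table, 0, [{"add": {"path": "part-0.parquet"}},
                                {"add": {"path": "part-1.parquet"}}])
        # Version 1 rewrites part-0 into part-2.
        write_commit(table, 1, [{"remove": {"path": "part-0.parquet"}},
                                {"add": {"path": "part-2.parquet"}}])
        return sorted(live_files(table))


print(demo())  # ['part-1.parquet', 'part-2.parquet']
```

Because the old Parquet file is only marked as removed in the log rather than deleted straight away, earlier versions of the table remain readable.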

Key features of Delta Lake

Implementing this additional storage layer goes a long way towards tackling the problems of data reliability, overall performance and complexity. Key features of Delta Lake include:

- ACID transactions

The atomicity of transactions is guaranteed because every action performed on the table is tracked in the transactional Delta Log in a specific serial order. Operations performed on the data lake either complete fully or do not complete at all. Thanks to the serializable isolation level, it is possible to write to and read from the same directory or table concurrently, without the risk of inconsistency. A query loads only the latest snapshot that existed at the time of reading (read more about it here).

- Schema management

To prevent unintentional pollution of the target table, Delta Lake validates the schema before the write action (schema enforcement). Columns of the target table that are not present in the DataFrame are set to null. Conversely, if the DataFrame has extra columns that are not present in the target table, the write operation raises an exception to let the developer know about the incompatibility. It is also possible to allow the target schema to be changed automatically (schema evolution) (click here to read more about it).
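As a rough sketch of how this looks in PySpark: a plain append is subject to schema enforcement, while setting the `mergeSchema` write option asks Delta Lake to evolve the table schema instead of raising an exception. The function name `append_to_delta` and its parameters are made up for this example; `df` is assumed to be a Spark DataFrame and `path` the location of an existing Delta table.

```python
def append_to_delta(df, path, evolve_schema=False):
    """Append df to the Delta table at path, optionally allowing schema evolution."""
    writer = df.write.format("delta").mode("append")
    if evolve_schema:
        # Extra DataFrame columns are added to the table schema
        # instead of causing the write to fail.
        writer = writer.option("mergeSchema", "true")
    writer.save(path)
```

Leaving `evolve_schema` off by default keeps enforcement as the normal behaviour, so a schema change has to be a deliberate choice.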

- Scalable metadata handling

Metadata about tables and files is stored in the Delta Log. As a result, the metadata can be accessed through the same mechanism as the data and can be processed using the distributed power of Spark, which helps with extremely large tables.

- Improved performance

Delta Lake employs several optimisation techniques that, according to Apache Spark, improve query performance by a factor of 10 to 100 compared with non-Delta formats. Key techniques include Data Skipping (reading only the relevant subsets of data thanks to statistics maintained in the Delta Log), Data Caching (the Delta Lake engine automatically caches the most frequently used data), and Compaction (coalescing small files into larger ones to speed up read queries) (click here to read more about it).
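The idea behind Data Skipping can be illustrated with a small plain-Python sketch. It assumes that per-file minimum and maximum column values are available, in the spirit of the statistics kept in the Delta Log; the function name, file names and values below are made up, and the real engine applies this logic internally when planning a query.

```python
def files_to_scan(file_stats, column, value):
    """Return the files whose [min, max] range for column could contain value."""
    selected = []
    for entry in file_stats:
        low = entry["minValues"][column]
        high = entry["maxValues"][column]
        if low <= value <= high:
            selected.append(entry["path"])
    return selected


# Made-up statistics for three data files.
stats = [
    {"path": "part-0.parquet", "minValues": {"order_id": 1}, "maxValues": {"order_id": 1000}},
    {"path": "part-1.parquet", "minValues": {"order_id": 1001}, "maxValues": {"order_id": 2000}},
    {"path": "part-2.parquet", "minValues": {"order_id": 2001}, "maxValues": {"order_id": 3000}},
]

# A filter such as order_id = 1500 only needs to read one of the three files.
print(files_to_scan(stats, "order_id", 1500))  # ['part-1.parquet']
```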

- Upserts and deletes

In contrast to standard Apache Spark, Delta Lake supports merge, update and delete operations. These are great for building complex use cases like Slowly Changing Dimension (SCD) operations, Change Data Capture or upserts from streaming queries (see examples here and here).
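A minimal upsert sketch using the `merge` builder of the Delta Lake Python API could look like the following. The function name `upsert` and its parameters are made up for this example: `delta_table` is assumed to be a `DeltaTable` object (for instance from `DeltaTable.forPath`), `updates_df` a Spark DataFrame with the same columns, and `key` the name of the column used for matching.

```python
def upsert(delta_table, updates_df, key):
    """Update rows that match on key and insert the ones that do not."""
    (
        delta_table.alias("target")
        .merge(updates_df.alias("source"), f"target.{key} = source.{key}")
        .whenMatchedUpdateAll()      # existing keys: overwrite all columns
        .whenNotMatchedInsertAll()   # new keys: insert the whole row
        .execute()
    )
```

An SCD or Change Data Capture load would typically use more specific match conditions and column mappings than the "update all, insert all" shortcut used here.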

- Time travel

With the transactional Delta Log and versioned Parquet files, it is possible both to track the history of changes and to load data as of a specific point in time. You can query a Delta table by version number or timestamp, audit historical changes, and roll back an unwanted change or job run.
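Reading an older snapshot can be sketched with the `versionAsOf` and `timestampAsOf` read options. The function names are made up for this example; `spark` is assumed to be an active SparkSession with Delta Lake available, and `path` the location of a Delta table.

```python
def read_version(spark, path, version):
    """Load the table as it was at a given version number."""
    return spark.read.format("delta").option("versionAsOf", version).load(path)


def read_as_of(spark, path, timestamp):
    """Load the table as it was at a given timestamp, e.g. '2021-01-01'."""
    return spark.read.format("delta").option("timestampAsOf", timestamp).load(path)
```

Comparing the result of `read_version` with the current table is a simple way to audit what a particular job run changed.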

- Unified batch and streaming sink

Delta Lake can be used efficiently as a streaming sink (and as a streaming source too) with Apache Spark’s Structured Streaming. Thanks to the transaction log, Delta Lake guarantees exactly-once processing of streaming and batch data, even when other streams or batch queries are running concurrently against the table (click here to read more about it).
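As a sketch, using a Delta table as a sink and as a source with Structured Streaming might look like this. The function names and path parameters are made up for this example; `stream_df` is assumed to be a streaming DataFrame and `spark` an active SparkSession with Delta Lake available.

```python
def stream_into_delta(stream_df, table_path, checkpoint_path):
    """Continuously append a streaming DataFrame to a Delta table."""
    return (
        stream_df.writeStream.format("delta")
        .outputMode("append")
        # The checkpoint lets the query resume after a restart without
        # writing the same data twice.
        .option("checkpointLocation", checkpoint_path)
        .start(table_path)
    )


def stream_from_delta(spark, table_path):
    """Read new rows from a Delta table as a streaming DataFrame."""
    return spark.readStream.format("delta").load(table_path)
```

Batch jobs can keep reading and writing the same table path while these streams are running.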

How to start using the Delta Lake format?

To initialise a Delta Lake table, use the "delta" format instead of the standard "parquet" format when writing data out to the data lake for the first time. Doing so creates the Delta Log in the same directory and makes certain Delta features available in the Spark engine.

```python
myDataFrame.write.format("delta").save(LocationConnectionString)
```

If a Parquet table already exists, we can convert it to the Delta format using the following logic:

```python
from delta.tables import DeltaTable

# Converts the format to Delta, creates the Delta Log folder and creates a JavaObject
deltaJavaObject = DeltaTable.convertToDelta(spark, "parquet.`" + LocationConnectionString + "`")

# Creates a DeltaTable object on which we can use methods like 'update' and 'merge'
myDeltaTable = DeltaTable.forPath(spark, LocationConnectionString)

# Creates a Spark DataFrame from the DeltaTable object
myDeltaTableDF = myDeltaTable.toDF()
```

Summary

Delta Lake was introduced to the public more than two years ago, but it is only now gaining popularity among both developers and large enterprises. By bringing two fundamental features to big data management, ACID transactions and automatic data indexing, Delta has paved the way to simplifying complex data warehouse solutions and delivering faster and more reliable architectures.

The next article will contain several examples that should give you a brief overview of how Delta Lake's features and benefits apply in practice. If you want to learn about Structured Streaming using Delta Lake, read a great article by Jose Mendes: Lambda vs Azure Databricks Delta Architecture.

If you would like to know how we could help you with your data, please get in touch.