One of the many exciting announcements made at Microsoft Build recently was the release of the new Cosmos DB Bulk Executor library, which offers massive performance improvements when loading large amounts of data into Cosmos DB (see https://docs.microsoft.com/en-us/azure/cosmos-db/bulk-executor-overview for details on how it works). On a project I’ve worked on, we copied large amounts of data to Cosmos DB using Azure Data Factory (ADF) and observed that the current Cosmos DB connector doesn’t always make full use of the provisioned RU/s. I am therefore keen to see what the new library can offer and whether our clients can take advantage of these improvements.

In this post I will compare the performance of the Cosmos DB connector in ADF V1 and ADF V2 with that of a C# application using the Bulk Executor library. As mentioned in the Microsoft announcement, the new library has already been integrated into a new version of the Cosmos DB connector for ADF V2, so the ADF V2 tests also use the Bulk Executor library.

All tests involved copying 1 million rows of data from an Azure SQL DB to a single Cosmos DB collection. The scaling settings used for the resources involved were:

- Cosmos DB collection – partitioned by id, 50,000 RU/s

- Azure SQL DB – S2 (50 DTUs)

Each test was repeated 3 times to enable an average time taken to be calculated.

Each document inserted into the collection looked like the below:

Test 1 – ADF V1

To set up the ADF V1 test I used the Copy Data Wizard to generate a pipeline that would copy data from my Azure SQL DB to Cosmos DB. I then executed the pipeline 3 times, recreating the Cosmos DB collection each time.

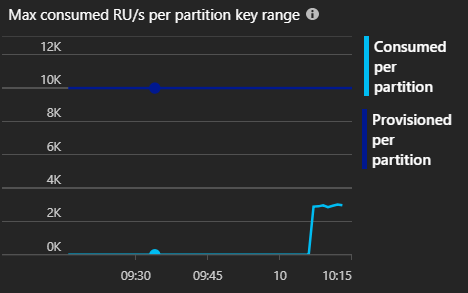

Each test behaved in a similar way: the process slowly ramped up to 100,000 requests per minute and sustained that throughput until completion. The tests only consumed around 3,000 RU/s of the 10,000 RU/s provisioned to each partition in the collection (each collection was created with 5 partitions).
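The per-partition figures above follow from some quick arithmetic. The sketch below assumes the 50,000 RU/s is split evenly across the 5 physical partitions (which is what the 10,000 RU/s per partition figure implies); the constant and function names are purely illustrative.

```python
# Figures from the post: a 50,000 RU/s collection with 5 partitions, and
# ADF V1 observed consuming roughly 3,000 RU/s per partition.
PROVISIONED_RU_PER_SEC = 50_000
PARTITION_COUNT = 5
OBSERVED_RU_PER_PARTITION = 3_000


def ru_per_partition(total_ru: int, partitions: int) -> float:
    """Throughput available to each partition, assuming an even split."""
    return total_ru / partitions


def utilisation(observed_ru: float, available_ru: float) -> float:
    """Fraction of the available throughput that was actually consumed."""
    return observed_ru / available_ru


per_partition = ru_per_partition(PROVISIONED_RU_PER_SEC, PARTITION_COUNT)
used = utilisation(OBSERVED_RU_PER_PARTITION, per_partition)
print(f"{per_partition:.0f} RU/s per partition, {used:.0%} utilised")
```

In other words, ADF V1 was using only about 30% of the throughput the collection was paying for.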

The results of the test were:

- Run 1 – 677 seconds

- Run 2 – 641 seconds

- Run 3 – 513 seconds

- Average – 610 seconds

The performance improved with each run, with run 3 taking 513 seconds, nearly 3 minutes quicker than the first. I can’t explain the differences in time taken; however, it seemed that ADF ramped up the throughput more quickly with each run, so it may be down to scaling within the ADF service itself.
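The averages quoted in this post are simple arithmetic means of the three runs. A minimal sketch using Python’s standard `statistics` module with the ADF V1 timings from above, which also reproduces the gap between the first and last run; the `summarise` helper is hypothetical.

```python
from statistics import mean

# ADF V1 run times in seconds, as reported above.
adf_v1_runs = [677, 641, 513]


def summarise(runs: list[float]) -> tuple[float, float]:
    """Return the mean run time and how much quicker the last run was than the first."""
    return mean(runs), runs[0] - runs[-1]


avg, improvement = summarise(adf_v1_runs)
print(f"average {avg:.0f}s, last run {improvement}s ({improvement / 60:.1f} min) quicker than the first")
```

The same helper applies unchanged to the ADF V2 and C# timings later in the post.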

Test 2 – ADF V2

To set up the ADF V2 test I again used the Copy Data Wizard to generate the pipeline. I then executed the pipeline 3 times, recreating the Cosmos DB collection each time.

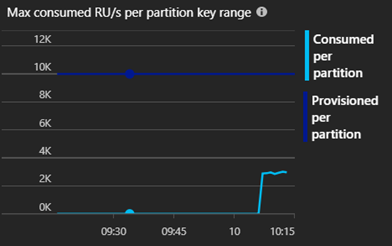

ADF V2 represents a massive improvement over V1, cutting the average load time by around 74%. The connector used more than the provisioned throughput, meaning some throttling occurred. However, this also suggests that the collection could have been scaled up further to obtain even higher performance.
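When a client exceeds the provisioned RU/s, Cosmos DB rejects the excess requests with HTTP 429 and a suggested retry interval, and the client has to wait and retry. The sketch below is a generic illustration of that pattern, not the ADF connector’s or the Bulk Executor library’s actual logic; `ThrottledError`, `with_retries` and `flaky_insert` are hypothetical names, and a simulated insert stands in for a real Cosmos DB call.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class ThrottledError(Exception):
    """Hypothetical stand-in for an HTTP 429 'request rate too large' response."""

    def __init__(self, retry_after_ms: int) -> None:
        super().__init__(f"throttled, retry after {retry_after_ms} ms")
        self.retry_after_ms = retry_after_ms


def with_retries(operation: Callable[[], T], max_attempts: int = 5,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Run `operation`, waiting for the server-suggested delay each time it is throttled."""
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except ThrottledError as err:
            if attempt == max_attempts:
                raise  # out of attempts: surface the throttling error to the caller
            sleep(err.retry_after_ms / 1000)
    raise ValueError("max_attempts must be at least 1")


# Usage: simulate an insert that is throttled twice before succeeding.
calls = {"n": 0}


def flaky_insert() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ThrottledError(retry_after_ms=10)
    return "inserted"


print(with_retries(flaky_insert, sleep=lambda seconds: None))  # prints "inserted"
```

Every retried request adds latency, which is why some throttling is acceptable but persistent throttling is a sign the collection needs more RU/s.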

Interestingly, the ADF V2 requests didn’t appear on the number of requests metric shown in the Azure Portal, so I’m unable to see how many requests ADF V2 was able to sustain. I’m unsure of the reason for this; however, it could be something like ADF using direct connection mode to Cosmos DB rather than connecting through the gateway, meaning the requests aren’t counted.

The results of the test were:

- Run 1 – 163 seconds

- Run 2 – 158 seconds

- Run 3 – 156 seconds

- Average – 159 seconds

The performance of ADF V2 was more consistent than V1, remaining reasonably steady across all runs.

Test 3 – C# w/ Bulk Executor Library

To set up the C# test I wrote a quick C# console application that uses the Bulk Executor library. The application ran on my local machine rather than within Azure, so there will obviously be a performance hit from the additional network latency. The source code for the application can be found at https://github.com/benjarvis18/CosmosDBBulkExecutorTest.

The results of the test were:

- Run 1 – 240 seconds

- Run 2 – 356 seconds

- Run 3 – 352 seconds

- Average – 316 seconds

The performance of the C# application was less consistent; however, this is probably due to the network between my local machine and Azure. My application is also not very scalable, as it loads the whole dataset into memory rather than streaming it, which would be required with a larger dataset. The code itself is probably also not as optimised as it could be.
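Streaming instead of loading everything up front means reading the source a batch at a time and passing each batch to the bulk import before reading the next. A minimal Python sketch of the batching half, assuming the source can be iterated row by row; `fake_source_rows` is a hypothetical placeholder for a real database cursor, and the import call itself is omitted.

```python
from itertools import islice
from typing import Iterable, Iterator, TypeVar

T = TypeVar("T")


def batched(rows: Iterable[T], batch_size: int) -> Iterator[list[T]]:
    """Yield lists of at most `batch_size` rows without materialising the whole input."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    iterator = iter(rows)
    while batch := list(islice(iterator, batch_size)):
        yield batch


def fake_source_rows(count: int) -> Iterator[dict]:
    """Hypothetical stand-in for a cursor reading rows from Azure SQL DB one at a time."""
    for i in range(count):
        yield {"id": str(i)}


# Only one batch is held in memory at a time; 10 rows in batches of 4 gives sizes 4, 4, 2.
for batch in batched(fake_source_rows(10), batch_size=4):
    print(len(batch))
```

Python 3.12 also ships `itertools.batched`, which yields tuples rather than lists; the hand-rolled version above works on earlier versions too.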

Overall, however, my C# application still completed the load in roughly half the time taken by ADF V1 (an average of 316 seconds compared with 610 seconds).

Comparison

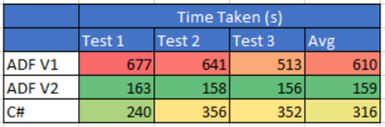

The final average times taken were:

- ADF V1 – 610 seconds

- ADF V2 – 159 seconds

- C# w/ Bulk Executor Library – 316 seconds

As above, ADF V2 is the clear winner, cutting the average load time by around 74% compared with ADF V1. This represents a huge performance gain and could provide significant cost savings for users loading large amounts of data into Cosmos DB. My C# application took around 48% less time than ADF V1 despite running outside of Azure without any optimisation, so the performance benefits of the new library are significant.
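The percentages above are reductions in average load time relative to ADF V1. A short sketch that derives them, along with the equivalent speed-up factor and rows per second, from the averages reported in this post; `compare` is a hypothetical helper name.

```python
# Average run times (seconds) for copying 1 million rows, as reported in this post.
ROWS = 1_000_000
AVERAGES = {"ADF V1": 610, "ADF V2": 159, "C# w/ Bulk Executor": 316}


def compare(averages: dict[str, float], baseline: str) -> dict[str, tuple[float, float, float]]:
    """Return (fraction of time saved, speed-up factor, rows per second) relative to `baseline`."""
    base = averages[baseline]
    return {
        name: (1 - seconds / base, base / seconds, ROWS / seconds)
        for name, seconds in averages.items()
    }


for name, (saved, speedup, rate) in compare(AVERAGES, "ADF V1").items():
    print(f"{name}: {saved:.0%} less time, {speedup:.1f}x faster, {rate:,.0f} rows/s")
```

Expressed as a speed-up factor, ADF V2 loaded the data roughly 3.8 times faster than V1 and the C# application roughly 1.9 times faster.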

The new library is an exciting development for Cosmos DB and allows us to fully utilise the capabilities it has to offer when dealing with large amounts of data. I look forward to making use of these benefits in projects, especially the significant improvements in ADF V2!

As always, if you have any questions or comments please let me know.
