Azure Databricks is a cloud-based service that allows Spark jobs to be run against large amounts of data in a notebook-based workspace. The service offers a huge amount of functionality, combining the constantly evolving platform provided by Databricks with the flexibility and scale of computing power that Azure is renowned for. Alongside traditional uses for PySpark, such as data cleaning and processing within ETL processes, machine learning and AI applications are also very well supported by Azure Databricks.

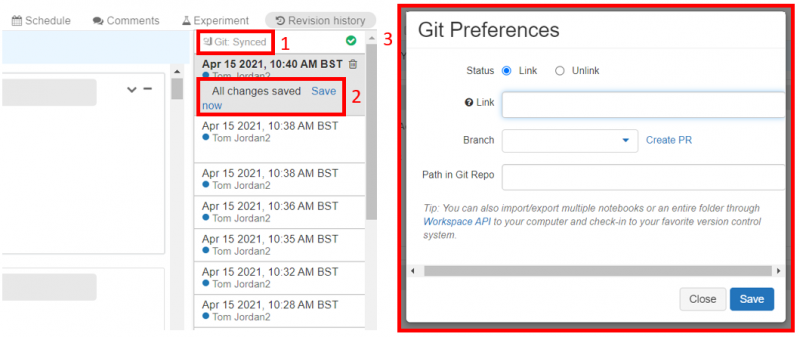

When multiple developers are working on Databricks notebooks, which can contain complicated structures and queries, the need for a robust source control mechanism is all too apparent. Until recently, this was handled by the Git integration within the History panel, which, while serving its purpose, lacked much of the functionality one would expect. Repos, which is Databricks’ new source control feature, aims to provide Git integration with a more mature UI and service.

Existing Source Control Process

As mentioned, the existing Git integration takes place within the History panel of Databricks. After linking a Git repository to the Databricks workspace, each notebook must be manually linked to the branch in which the work will be carried out. Once this work is complete, the changes to each notebook must then be committed to the remote branch manually, from which a pull request can be used to move the updates into the main branch.

There are several issues associated with this mechanism. Firstly, if the work requires changes to several different notebooks, it is easy to forget to commit the changes in each of those notebooks. Additionally, it is easy to link different notebooks to different branches by mistake, which means the commits end up spread across separate remote branches. If multiple developers are working at the same time and making these mistakes, it isn’t hard to imagine the headaches that could arise in terms of source control!

Repos

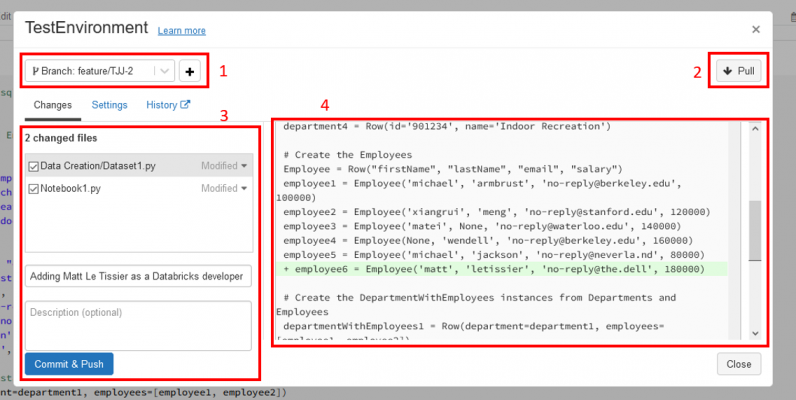

The Repos functionality aims to avoid these issues by providing a mechanism for all notebooks to be linked to a branch, have their changes committed and then pushed to the remote branch in single operations. This is available in Azure Databricks in Public Preview, and provides a separate UI away from the History panel to achieve this.

In order to use this feature, it must first be enabled within the workspace. This can be achieved through the Advanced tab of the Admin Console. Once this has taken place, a Repos icon will be present within the workspace sidebar. Git integration must also be correctly configured for the use case, which can be achieved within User Settings.

Once these initial steps are complete, one can clone the desired Git repository in order to begin working on the codebase. After clicking on the Repos icon in the navigation bar, the Add Repo button allows the Git repository to be cloned by clicking ‘Clone remote Git repo’ and entering the repository’s URL. This will only pull in existing Databricks notebooks in the repo, which allows for easier navigation.
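The same clone can also be scripted rather than clicked through. The snippet below is a minimal sketch, assuming the Repos REST API (`POST /api/2.0/repos`) is available in your workspace. The workspace URL, token, repository URL and path are all placeholder values, and `build_clone_request` is a hypothetical helper name rather than anything provided by Databricks. It only builds the request, so nothing is sent until you choose to send it.

```python
import json
import urllib.request


def build_clone_request(workspace_url, token, repo_url, provider, path):
    """Build (but do not send) a request asking Databricks to clone a Git repo."""
    payload = {"url": repo_url, "provider": provider, "path": path}
    return urllib.request.Request(
        url=f"{workspace_url.rstrip('/')}/api/2.0/repos",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values only: swap in your own workspace, token and repository.
request = build_clone_request(
    workspace_url="https://adb-0000000000000000.0.azuredatabricks.net",
    token="<personal-access-token>",
    repo_url="https://dev.azure.com/example-org/example-project/_git/example-repo",
    provider="azureDevOpsServices",  # provider name used for Azure DevOps repos
    path="/Repos/someone@example.com/example-repo",
)

# Sending is left commented out so the sketch has no side effects:
# with urllib.request.urlopen(request) as response:
#     print(json.load(response))
```

Keeping the request building separate from the sending makes it easy to inspect the payload before anything touches the workspace.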

When working in the notebooks, a new button is added to the left of the notebook name, labelled with the name of the notebook’s current branch. Clicking it opens the Repos UI for managing source control. The Pull button allows changes from the remote branch to be applied to the notebooks in the workspace, while the Commit and Push button allows changes to be sent to the remote branch. Additional functionality includes the ability to commit individual notebooks, and the ability to inspect the changes made to a notebook during development. Changing branches is also a straightforward process within the UI.

How can it be used on projects?

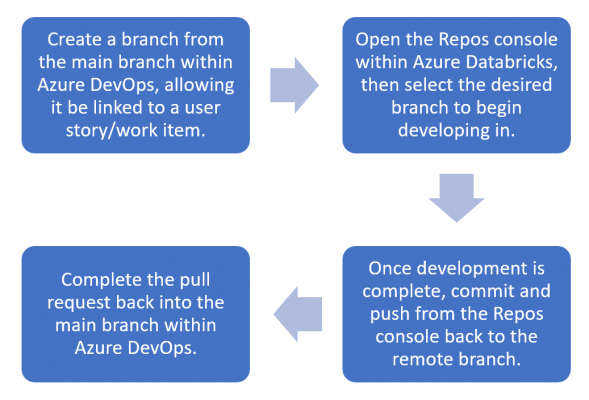

Adatis has a fairly standardised process for source control using the Git integration within Visual Studio, but because of the limited functionality previously available in Azure Databricks, this process could not be applied when using the service. However, Repos allows a much more similar approach to be used within Databricks as would be used in Visual Studio. One process that could be used is:

- Create the branch within Azure DevOps, allowing for the appropriate user story/work item to be linked for project management purposes. The integration between Azure DevOps and Azure Databricks enables this.

- The workspace can then be synced to the desired branch using the Git dialog within Repos.

- After the required development is completed, the changes can then be committed and pushed to the remote branch using the Git dialog.

- Within Azure DevOps, a pull request can then be raised to merge the changes back into the main branch.
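The branch sync in the second step above can also be scripted. This is a minimal sketch, assuming the Repos REST API's update call (`PATCH /api/2.0/repos/{repo_id}` with a `branch` field) is available in your workspace. The repo ID, branch name, workspace URL and token are placeholders, and `build_checkout_request` is a hypothetical helper name. The commit and push step is not covered here and is assumed to remain a job for the Git dialog.

```python
import json
import urllib.request


def build_checkout_request(workspace_url, token, repo_id, branch):
    """Build (but do not send) a request that points a Databricks Repo at a branch."""
    return urllib.request.Request(
        url=f"{workspace_url.rstrip('/')}/api/2.0/repos/{repo_id}",
        data=json.dumps({"branch": branch}).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="PATCH",
    )


# Placeholder values only: the repo ID would come from the workspace itself.
checkout = build_checkout_request(
    workspace_url="https://adb-0000000000000000.0.azuredatabricks.net",
    token="<personal-access-token>",
    repo_id=123,
    branch="feature/example-work-item",
)

# Sending is left commented out so the sketch has no side effects:
# urllib.request.urlopen(checkout)
```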

Limitations

While Repos is a vast improvement over the existing Git integration offering within Azure Databricks, there are still some limitations to the service. There’s a lack of merge functionality within the Git dialog, meaning that if a branch fell behind the main branch in remote, it wouldn’t be possible to update the branch within Azure Databricks to avoid merge conflicts during a pull request. While this can be handled within Azure DevOps instead, it would be a nice piece of functionality to have, particularly as it already exists within Visual Studio’s Git integration.

Another limitation noticed during my experiences with Repos was that branches that have been deleted in the remote repo don’t seem to be deleted from the list of branches within Repos’ Git integration. The branch search functionality within the UI stops this from being a large issue. However, removing deleted branches from the list would be a nice safety feature to avoid accidentally working in a branch that no longer exists in the remote repository.
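Until that is addressed, a small check outside Databricks can flag the problem. The sketch below is illustrative only: `find_stale_branches` is a hypothetical helper, and the two branch lists are made-up examples. In practice the remote list could be gathered with something like `git ls-remote --heads`, and the other list copied from the branch dropdown in Repos.

```python
def find_stale_branches(listed_in_repos, present_on_remote):
    """Return branches still listed in Repos that no longer exist on the remote."""
    remote = set(present_on_remote)
    return sorted(branch for branch in listed_in_repos if branch not in remote)


# Made-up branch names for illustration.
listed = ["main", "feature/new-pipeline", "feature/old-cleanup"]
remote = ["main", "feature/new-pipeline"]

stale = find_stale_branches(listed, remote)
print(stale)
```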

Conclusion

While not as kitted out as other comparable Git integrations, Repos provides a vast improvement to the existing source control capabilities within Azure Databricks, and is a tool that could easily fit into a typical source control workflow. However, the feature is still in Public Preview, so one may need to check before implementing it within project work for clients.
